DARPA Urban Challenge 2007: Caltech's Alice

Alice was Team Caltech's entry in the DARPA autonomous vehicle challenges from 2005 to 2007: a massively reinforced Ford E-350 van with a sports chassis, about a dozen rack-mount servers, and a 3.5 kW generator. (Alice was preceded by Bob, a simple '96 Chevy Tahoe.)

Alice was originally built for the 2005 DARPA Grand Challenge, a race of more than 100 miles through the desert over terrain that was charted to varying degrees. The car's performance was memorable: it lost GPS lock and reacquired it directly on top of the press stand, as featured in the video at the bottom of this page.

I joined Team Caltech in April 2007, during the eight months leading up to the third challenge, which was to take place in an urban environment with road rules, signs, and human (stunt driver) traffic. I started off programming for the navigation team, implementing the details of lane-change maneuvers and getting up to speed on the car's sprawling codebase. Many team members split their time between the car and their own research, which led to a fragmented, poorly organized, and inefficient collection of dozens of programs that collectively brought about 2 kilowatts of Xeon processors to a crawl.

Rendering of a 3D point cloud taken in the garage

As a result, more by necessity than anything else, I soon moved to the sensing team. There, I began by integrating a new cruise-control radar (TRW AutoCruise AC20) into the car's sensing and mapping platform over a CAN interface, which greatly improved the car's ability to detect distant and moving objects. The radar was extremely impressive: in road tests, it could track around a dozen cars simultaneously, traveling in both directions, and could detect person-sized targets moving at walking speed.

After that, I moved on to Alice's numerous LIDAR sensors: something like eight line-scanning time-of-flight rangefinders placed around the car. To correlate data accurately between the different sensors, it was essential to know exactly where each sensor was mounted on the car. Since the sensors were frequently adjusted, I found it prudent to develop a utility that used a 3D point cloud generated by the pan-tilt-mounted LIDAR, along with specially placed targets, to align and calibrate the many sensors on the car.
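The calibration math isn't described here, but one standard way to recover a sensor's mounting pose from matched target positions is the Kabsch (SVD) least-squares rigid alignment. The sketch below assumes the target centers have already been extracted and matched, once in the sensor's own frame and once in the vehicle frame (for example, picked out of the pan-tilt point cloud). The function name `estimate_sensor_pose` is hypothetical, and this is not necessarily what the original utility did.

```python
import numpy as np


def estimate_sensor_pose(points_sensor, points_vehicle):
    """Least-squares rigid transform (R, t) such that
    points_vehicle[i] ~= R @ points_sensor[i] + t  (Kabsch / SVD method).

    points_sensor:  (N, 3) target centers measured in the sensor's own frame
    points_vehicle: (N, 3) the same targets located in the vehicle frame
    (hypothetical inputs; target extraction and matching are assumed done)
    """
    P = np.asarray(points_sensor, dtype=float)
    Q = np.asarray(points_vehicle, dtype=float)
    if P.shape != Q.shape or P.ndim != 2 or P.shape[1] != 3 or P.shape[0] < 3:
        raise ValueError("need >= 3 matched, non-collinear 3D points")

    # Center both point sets on their centroids
    p_mean = P.mean(axis=0)
    q_mean = Q.mean(axis=0)

    # Cross-covariance matrix of the centered sets, and its SVD
    H = (P - p_mean).T @ (Q - q_mean)
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if the best orthogonal fit would be a reflection
    d = 1.0 if np.linalg.det(Vt.T @ U.T) > 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = q_mean - R @ p_mean
    return R, t
```

With more than three targets the fit averages out measurement noise. For a line-scanning LIDAR, whose returns all lie in a single plane, the method still works as long as the targets are not all collinear within that plane.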

Then the real work started: writing the program that processes all of the incoming LIDAR scans, combines the data from all of them (including the pan-tilt unit), and identifies static and moving obstacles. For the navigation logic, the program needed to keep tracking moving obstacles while they passed behind obstructions, and to do significant trajectory prediction. Developing these algorithms involved many details; one of the most difficult parts was identifying road strikes, where the car would dip forward enough that the LIDARs swept the road surface ahead of it. A road strike could look very much like a car headed directly towards Alice in the oncoming lane, and since avoiding oncoming traffic was one of the elements of the challenge, these returns could not simply be filtered out. Most of the teams that finished the race had Velodyne 3D LIDARs, which removed much of the uncertainty we had to deal with in object detection.

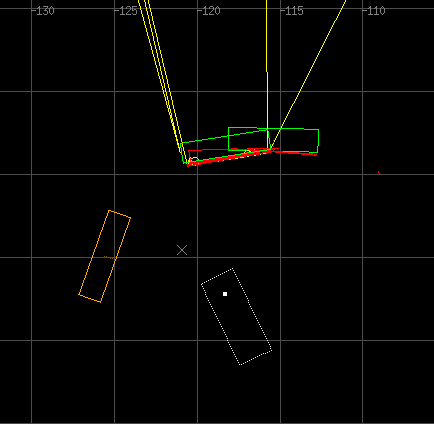

Tracking (green/yellow) and prediction (orange), including turning and acceleration
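The prediction model isn't spelled out above. A common choice consistent with "turning and acceleration" in the caption is a constant-turn-rate, constant-acceleration (CTRA) model, sketched below: a track's state is propagated forward in small midpoint-rule steps. `TrackState` and `predict_path` are hypothetical names, and the rule that a braking vehicle stops instead of reversing is an assumption, not necessarily what Alice's tracker did.

```python
import math
from dataclasses import dataclass


@dataclass
class TrackState:
    x: float         # position in a local ground frame (m)
    y: float
    heading: float   # rad, measured from the +x axis
    speed: float     # m/s
    accel: float     # m/s^2, held constant over the horizon
    yaw_rate: float  # rad/s, held constant over the horizon


def predict_path(s, horizon=3.0, dt=0.1, substeps=10):
    """Predict (t, x, y) samples every dt seconds out to `horizon` using a
    constant-turn-rate, constant-acceleration (CTRA) motion model."""
    x, y, heading, speed = s.x, s.y, s.heading, s.speed
    h = dt / substeps
    path = []
    for k in range(1, int(round(horizon / dt)) + 1):
        for _ in range(substeps):
            # Midpoint rule: use heading and speed at the middle of the substep
            mid_heading = heading + 0.5 * s.yaw_rate * h
            mid_speed = max(0.0, speed + 0.5 * s.accel * h)
            x += mid_speed * math.cos(mid_heading) * h
            y += mid_speed * math.sin(mid_heading) * h
            heading += s.yaw_rate * h
            # Assumption: a braking vehicle stops rather than reversing
            speed = max(0.0, speed + s.accel * h)
        path.append((k * dt, x, y))
    return path
```

The same propagation can also coast an occluded track forward for a few frames, which is one simple way to keep obstacles persistent while they pass behind something.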

For more information on the LIDAR processing and tracking, I would point you to my final report on implementing more advanced tracking algorithms, which can be found here:

Much of the work revolved around reaching into the wider Alice codebase and speeding up the areas that needed it. For example, collision detection initially didn't use bounding boxes, so you can imagine that once the LIDARs started returning a considerable number of tracked objects, O(n^2) really became a problem. At one point, to track the ground plane, I also had (?) to implement a polygon rasterizer in the C++ preprocessor. I'm really not proud of that.
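The exact fix isn't described, but the usual remedy is a cheap broad phase: compute an axis-aligned bounding box for each object and run the expensive exact collision test only on pairs whose boxes overlap. The sketch below adds a sort-and-sweep along x so that most pairs are never compared at all. `Box`, `bounding_box`, and `candidate_pairs` are hypothetical names, the exact polygon test is left out, and this is an illustration of the technique rather than Alice's actual code.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Box:
    min_x: float
    min_y: float
    max_x: float
    max_y: float


def bounding_box(poly: Sequence[Point]) -> Box:
    xs = [p[0] for p in poly]
    ys = [p[1] for p in poly]
    return Box(min(xs), min(ys), max(xs), max(ys))


def boxes_overlap(a: Box, b: Box) -> bool:
    # Two axis-aligned boxes overlap iff their intervals overlap on both axes
    return (a.min_x <= b.max_x and b.min_x <= a.max_x and
            a.min_y <= b.max_y and b.min_y <= a.max_y)


def candidate_pairs(polygons: Sequence[Sequence[Point]]) -> List[Tuple[int, int]]:
    """Sort-and-sweep broad phase: return sorted index pairs (i, j), i < j,
    whose bounding boxes overlap. Only these need an exact polygon test."""
    boxes = [bounding_box(p) for p in polygons]
    order = sorted(range(len(boxes)), key=lambda i: boxes[i].min_x)
    active: List[int] = []  # earlier boxes that may still overlap along x
    pairs: List[Tuple[int, int]] = []
    for i in order:
        b = boxes[i]
        # A box ending before this one starts can't overlap it or any later box
        active = [j for j in active if boxes[j].max_x >= b.min_x]
        for j in active:
            if boxes_overlap(boxes[j], b):
                pairs.append((min(i, j), max(i, j)))
        active.append(i)
    return sorted(pairs)
```

The worst case (every object clustered in one spot) is still quadratic, but for obstacles spread out around the car each box is only compared against its neighbors along x.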

At any rate, the run-up to the contest was an intense experience, with many all-nighters of coding followed by full days of testing in a car that reached over 130 °F (the A/C was routed to cool the servers). In the end, Caltech's Alice wasn't among the 11 of 35 entrants that qualified for the final race, in which only six teams actually finished. Most of the six finishers had extremely customized cars, sponsored and built by the car companies themselves (CMU's entry, for example, had all of its sensors hidden behind custom paneling). Our car was one of the few hand-me-downs from the desert race; in fact, it was physically incapable of completing the parking-lot qualifier because the van wouldn't fit in the allotted space. True to form, as in years past, we were ultimately disqualified for trying to ram the nearest humans during one of the qualifiers involving stunt drivers.

I was lucky enough to attend the final contest, however, and witnessed the first autonomous-vehicle road accident (I'm just off camera somewhere). It seems comical, but it's actually the sort of accident people get into. Person A stops at a stop sign and is lost, so they check the map (Cornell lost GPS and waited to reacquire it); Person B (MIT) follows protocol and waits behind them. After about 30 seconds, Person B gets bored and decides to pass. At that moment, Person A decides they know where they're going and starts moving again, but fails to check the mirrors and blind spot because they don't expect a car to be there, and Person B takes a second too long to realize Person A is moving again. The two then stop and trade insurance information (or rather, DARPA officials come in and assess the situation). Impressively, both teams went on to finish the race.

Now, for your viewing pleasure, some clips of Alice's bloodthirsty 2005 performance (I was not affiliated with the team at the time!):